AI vendors are everywhere right now.

Every product claims to be “AI-powered.” Every demo looks impressive. Every sales deck promises efficiency, speed, and competitive advantage. And almost every organization is being pushed, implicitly or explicitly, to pick tools quickly so they don’t “fall behind.”

That pressure is where most bad AI decisions start.

At BizKey Hub, we see the same pattern across industries. Companies don’t fail with AI because the technology doesn’t work. They fail because they choose vendors based on surface-level features instead of operational reality. They buy tools that look intelligent in isolation but collapse when introduced into real workflows, real data, real users, and real risk.

Evaluating AI vendors is not about picking the smartest model. It is about selecting systems that can survive inside your business.

This guide breaks down how to evaluate AI vendors and tooling using a practical checklist and scorecard, grounded in governance, architecture, risk, and long-term viability. Not theory. Not hype. Just the questions that actually matter once the pilot ends and the tool has to perform.

Why AI Vendor Evaluation Is Different From Traditional Software Selection

Traditional software evaluation focuses on features, integrations, and price. AI changes that equation.

AI systems behave less like static tools and more like adaptive participants in your operations. They learn from data. They generate outputs that influence decisions. They introduce new failure modes that didn’t exist with rule-based systems.

That means AI vendor evaluation must account for factors most procurement processes were never designed to handle, including:

- Model behavior that changes over time

- Probabilistic outputs rather than deterministic results

- Data lineage and training provenance

- Governance, auditability, and explainability

- Regulatory exposure tied to how outputs are used, not just stored

Frameworks like the National Institute of Standards and Technology (NIST) AI Risk Management Framework and ISO/IEC 23894, the international guidance on AI risk management, exist for a reason. They recognize that AI risk is systemic, not feature-level.

If your evaluation process looks the same as it did for CRM or project management software, you are already behind.

The Core Question You Should Be Asking

Before getting into checklists and scorecards, there is one framing question that simplifies everything:

What role is this AI system playing inside our business?

Is it:

- Assisting humans with analysis or drafting

- Automating decisions with downstream impact

- Acting as a gatekeeper for approvals or risk

- Operating in the background as infrastructure

Vendors often blur these distinctions intentionally. A tool that “just summarizes contracts” today can quietly become a decision driver tomorrow.

You cannot evaluate an AI vendor responsibly unless you understand where the system sits in your decision chain.

Category 1: Business Alignment and Use-Case Clarity

Start here. Always.

Many AI tools fail because they solve interesting problems instead of relevant ones.

Evaluation checklist:

- Is the use case clearly defined in business terms, not model capabilities?

- Can the vendor articulate measurable outcomes tied to cost, risk, speed, or quality?

- Does the tool reduce an existing bottleneck, or does it create a new one?

- Is success defined beyond “users like it” or “the demo worked”?

Strong vendors can map their tool directly to operational metrics. Weak vendors rely on abstract value statements.

If a vendor cannot explain how their AI fits into your actual workflows, they are selling potential, not results.

Category 2: Data Inputs, Data Ownership, and Data Boundaries

AI is only as good as the data it touches. This is where many evaluations become dangerously shallow.

You need precise answers to basic questions:

- What data does the system ingest?

- Where does that data originate?

- Is customer or proprietary data used for training or fine-tuning?

- Who owns derived outputs and embeddings?

Reputable vendors provide clear, written data handling policies and align with frameworks such as the OECD AI Principles and modern privacy regulations.

Be cautious of vague language like “data may be used to improve services.” That sentence has buried more compliance teams than almost anything else in AI contracts.

Category 3: Model Transparency and Explainability

Not every AI system needs full interpretability. But every AI system needs accountability.

Ask vendors:

- Can outputs be traced back to inputs or source material?

- Is there any level of explainability for high-impact decisions?

- Can the system surface confidence levels or uncertainty indicators?

- How does the model handle edge cases and ambiguous prompts?

Regulators are increasingly focused on explainability for AI systems that affect individuals, finances, or legal outcomes. Research from Gartner consistently shows that lack of transparency is one of the top blockers to enterprise AI adoption.

If a vendor responds with “the model is a black box,” that is not a technical limitation. It is a risk signal.

Category 4: Governance, Controls, and Human Oversight

AI without governance is not innovation. It is exposure.

Every serious AI vendor should support:

- Role-based access controls

- Human-in-the-loop review where appropriate

- Logging and audit trails for prompts and outputs

- Policy enforcement tied to usage context

Regulations like the European Union's AI Act make one thing clear. Governance is not optional.

If a vendor positions governance as something you can “add later,” you should assume it will be painful, expensive, or impossible.
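To make the controls above concrete, here is a minimal sketch of what an audit trail with context-based review flags can look like. It is an illustration, not any vendor's actual API: the `AuditRecord` fields, the `record_interaction` function, and the context names in `REVIEW_REQUIRED_CONTEXTS` are all hypothetical and would need to match your own policies.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone

# Assumption: usage contexts where an AI output feeds a decision and
# therefore needs human sign-off. These names are illustrative only.
REVIEW_REQUIRED_CONTEXTS = {"approval", "risk_decision"}


@dataclass
class AuditRecord:
    timestamp: str
    user: str
    context: str
    prompt: str
    output: str
    needs_human_review: bool


def record_interaction(user: str, context: str, prompt: str,
                       output: str, log: list) -> AuditRecord:
    """Append one prompt/output pair to the log as a JSON line."""
    record = AuditRecord(
        timestamp=datetime.now(timezone.utc).isoformat(),
        user=user,
        context=context,
        prompt=prompt,
        output=output,
        # Policy tied to usage context: flag high-impact contexts for review.
        needs_human_review=context in REVIEW_REQUIRED_CONTEXTS,
    )
    log.append(json.dumps(asdict(record)))
    return record


audit_log = []
drafting = record_interaction(
    "alice", "drafting", "Summarize this contract", "Summary text", audit_log
)
approval = record_interaction(
    "bob", "approval", "Should we approve this vendor?", "Recommendation text", audit_log
)
print(drafting.needs_human_review, approval.needs_human_review)
```

When you review a vendor, the useful question is whether their product gives you something equivalent out of the box: every prompt and output captured, attributable to a user, and tagged so that high-impact uses are routed to a person.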

Category 5: Security and Infrastructure Architecture

AI tools often sit at the intersection of sensitive systems, documents, and decision processes. That makes them high-value targets.

Evaluate:

- Where the system is hosted (cloud, region, isolation model)

- How credentials and secrets are managed

- Whether the vendor supports single-tenant or private deployments

- How incident response and breach notification are handled

AI vendors that cannot pass a basic security review should not be touching production data, regardless of how impressive their model appears.

Category 6: Integration Into Existing Systems

AI that lives in a silo rarely delivers value.

Strong vendors understand that AI is an operational layer, not a standalone destination.

Key questions include:

- Does the tool integrate with your core systems of record?

- Are APIs well-documented and stable?

- Can outputs be pushed into downstream workflows automatically?

- Is there support for event-driven or real-time use cases?

The most successful AI deployments we see are deeply embedded into tools people already use. Email, document systems, ERP, CRM, and workflow platforms matter more than flashy dashboards.

Category 7: Vendor Maturity and Roadmap Credibility

AI startups move fast. That is both a strength and a risk.

You need to assess whether a vendor can survive long enough to support your investment.

Look for:

- Clear product roadmaps tied to customer feedback

- Evidence of production deployments, not just pilots

- Sustainable pricing models that scale predictably

- Transparency around funding, burn, and long-term plans

Analyses from CB Insights show high churn among early AI vendors. Betting critical workflows on unstable platforms is not strategic. It is reckless.

Category 8: Legal, Compliance, and Contractual Safeguards

AI contracts require more scrutiny than traditional SaaS agreements.

You should evaluate:

- Liability allocation for incorrect or harmful outputs

- Indemnification clauses related to IP and data usage

- Regulatory support commitments as laws evolve

- Exit strategies and data portability

If your legal team treats an AI contract like a standard software license, pause the process.

AI shifts responsibility in subtle ways. Contracts must reflect that reality.

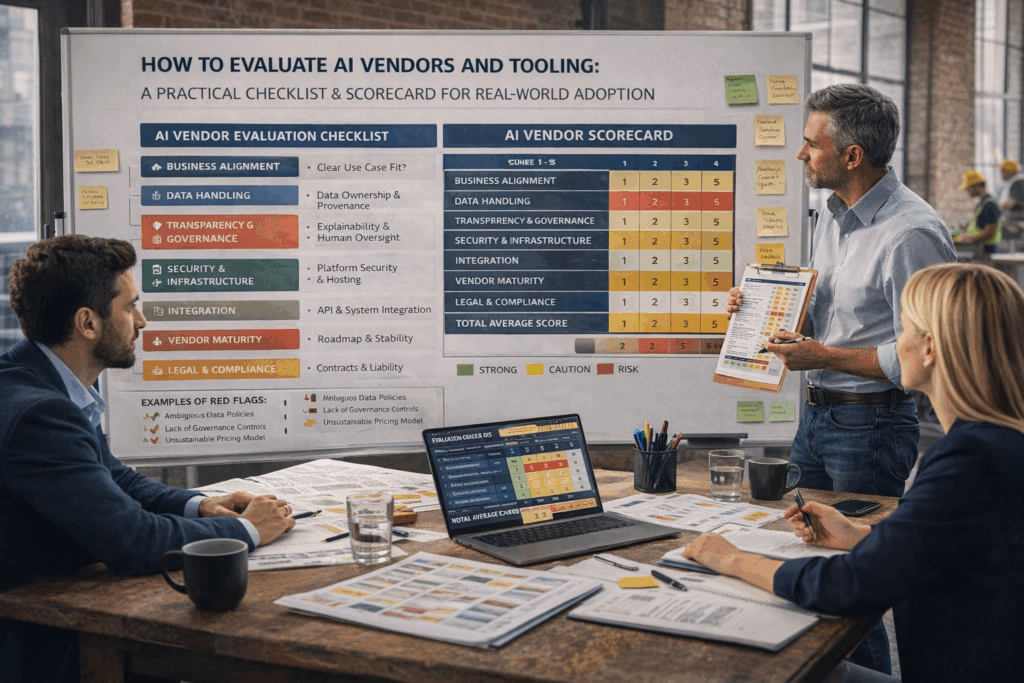

The AI Vendor Evaluation Scorecard

To make this actionable, here is a simplified scoring framework you can adapt.

Score each category from 1 to 5, where 1 indicates high risk and 5 indicates strong readiness.

Suggested categories:

- Business alignment and use-case clarity

- Data governance and ownership

- Transparency and explainability

- Governance and oversight controls

- Security and infrastructure

- Integration and extensibility

- Vendor maturity and viability

- Legal and compliance readiness

A vendor scoring high on demos but low on governance and data handling is not “almost ready.” They are fundamentally misaligned with enterprise reality.
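One way to encode that rule is to treat governance and data handling as gates rather than just two more numbers in an average. The sketch below is one possible implementation, not a standard: the category keys, the `GATING` set, and the `GATE_MIN` threshold of 3 are assumptions you should tune to your own risk tolerance.

```python
CATEGORIES = (
    "business_alignment",
    "data_governance",
    "transparency",
    "governance_controls",
    "security",
    "integration",
    "vendor_maturity",
    "legal_compliance",
)

# Assumption: a low score in these categories blocks the vendor
# no matter how strong the overall average looks.
GATING = ("data_governance", "governance_controls")
GATE_MIN = 3  # hypothetical minimum acceptable score for a gating category


def evaluate(scores: dict) -> dict:
    """Average the 1-5 category scores and list any gating failures."""
    missing = [c for c in CATEGORIES if c not in scores]
    if missing:
        raise ValueError(f"missing scores for: {missing}")
    for name in CATEGORIES:
        if not 1 <= scores[name] <= 5:
            raise ValueError(f"{name} must be between 1 and 5")

    average = sum(scores[c] for c in CATEGORIES) / len(CATEGORIES)
    blockers = [c for c in GATING if scores[c] < GATE_MIN]
    return {
        "average": round(average, 2),
        "blockers": blockers,
        "passes": not blockers,
    }


# A vendor with a strong demo and integrations but weak governance.
result = evaluate({
    "business_alignment": 5,
    "data_governance": 2,
    "transparency": 4,
    "governance_controls": 2,
    "security": 4,
    "integration": 5,
    "vendor_maturity": 4,
    "legal_compliance": 4,
})
print(result)
```

In this example the average comes out at 3.75, which looks respectable on its own, yet the vendor still fails because both gating categories score below the threshold. That is the point of the gate: a good average should never hide a governance gap.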

Common Red Flags to Watch For

Some warning signs show up again and again:

- Overreliance on a single foundation model with no contingency

- Resistance to security or compliance reviews

- Ambiguous data usage language

- Lack of customer references in similar industries

- Claims that governance will “slow innovation”

These are not cultural quirks. They are predictors of future pain.

How BizKey Hub Helps Companies Evaluate AI Vendors

At BizKey Hub, we help organizations move past hype and into sustainable AI adoption.

That means:

- Mapping AI tools to real operational workflows

- Running structured vendor evaluations using governance-first frameworks

- Designing scorecards aligned to business risk, not marketing claims

- Supporting pilots that are measurable, controlled, and scalable

AI is not a one-time purchase decision. It is an ongoing relationship between technology, people, and process. Choosing the right vendors is the difference between leverage and liability.

Final Thought

The companies that win with AI are not the ones that adopt the fastest. They are the ones that choose deliberately.

Evaluating AI vendors requires discipline, skepticism, and a willingness to slow down long enough to ask uncomfortable questions. That effort pays off when AI becomes a durable capability instead of an expensive experiment.

If you treat AI vendor selection as a strategic decision rather than a procurement exercise, you give yourself a real chance to get this right.